New autonomous car tech can ‘gaze’ at the world like humans

AEye's new technology can help autonomous cars prioritize obstacles the way human eyes do, making them more efficient.

You have probably heard that the human eye is the best image-capturing technology in the world. Camera manufacturers are therefore always tweaking their products to match the way our eyes behave in real-world scenarios. The best of these cameras are now being enlisted by technology and automobile firms in their effort to make autonomous mobility a reality. However, an autonomous car is more than just a few sensors and a computer mounted on top of a car.

The concept behind driverless cars is simple: the computers need to ‘see’ (analyse) the road and use clever algorithms to drive on it in a way that is considered safe by human standards. Up until now, most of the autonomous vehicles you have seen have been equipped with lidar sensors to get a view of the road ahead. However, the quality of lidar sensors varies: some expensive ones can scan the surroundings across 320 degrees, whereas the affordable solid-state lidar units are only suitable for slow urban conditions.

However, cheaper technology can be put to good use through clever implementation. An athlete and an ordinary person are born with similar eyes, but they use them in completely different ways: the former uses them in split seconds to clear hurdles, whereas the latter may use them at leisure to look around and find an alternate route. AEye, an AI startup, is following the athlete's way, using affordable sensors to let the vehicle’s computer focus on the road ahead.

While driving, the human mind focuses on the road ahead while staying alert to dangers looming in the surroundings. AEye’s system follows a similar approach: its algorithms are designed so that the computer focuses its ‘laser vision’ on a particular part of the road while staying aware of pedestrians and roadside obstacles in the surroundings. The company says that its AI-enabled system can see as far as 300 meters with an angular resolution as fine as 0.1 degrees.
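To put those two figures in perspective, the short sketch below converts an angular resolution into the approximate gap between neighbouring laser samples at a given distance. It is plain geometry rather than anything taken from AEye, and the function name `point_spacing_m` is made up for illustration.

```python
import math


def point_spacing_m(range_m: float, angular_resolution_deg: float) -> float:
    """Approximate gap, in meters, between two adjacent lidar samples
    that are separated by the given angle and hit a flat surface
    facing the sensor at the given range."""
    half_angle = math.radians(angular_resolution_deg) / 2
    return 2 * range_m * math.tan(half_angle)


# Figures quoted by the company: 300 m range, 0.1 degree resolution.
spacing = point_spacing_m(300, 0.1)
print(f"Sample spacing at 300 m: {spacing:.2f} m")
```

At the quoted limits, neighbouring samples land roughly half a meter apart, which is why concentrating the finest resolution on the part of the scene that matters is so valuable.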

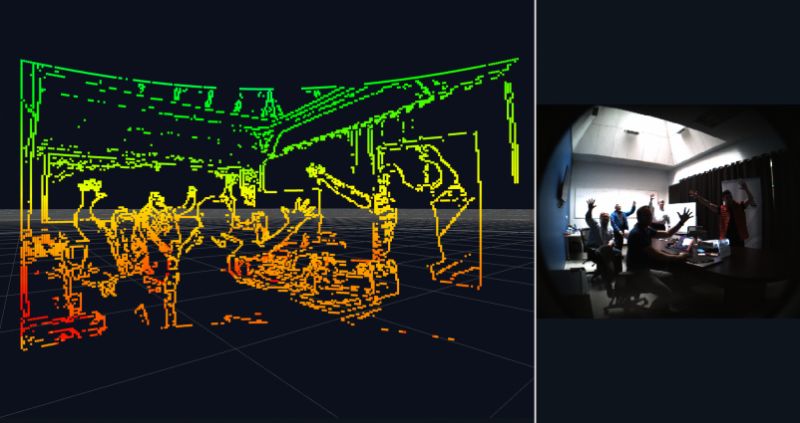

The system can scan certain areas of its field of vision in high resolution while scanning the ‘not-so-important’ bits in lower resolution, thereby utilising the hardware effectively. “You can trade resolution, scene revisit rate, and range at any point in time,” AEye founder Luis Dussan said in an interview with MIT Technology Review. “The same sensor can adapt.” The system can also add colour to raw lidar images, which can help it understand key human visual signals such as brake lights and indicators.
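One way to picture this trade-off is as a fixed budget of laser shots per frame that gets divided unevenly between regions. The sketch below is only an illustration of that idea, not AEye's actual algorithm: the region names, areas, priority weights and the `allocate_shots` function are all assumptions.

```python
from typing import Dict, Tuple


def allocate_shots(budget: int,
                   regions: Dict[str, Tuple[float, float]]) -> Dict[str, int]:
    """Split a per-frame shot budget across regions.

    Each region maps to (area in square degrees, priority weight).
    A region's share is proportional to area * priority, so a small
    high-priority region ends up with a much denser scan.
    """
    weights = {name: area * priority
               for name, (area, priority) in regions.items()}
    total = sum(weights.values())
    return {name: round(budget * w / total) for name, w in weights.items()}


# Hypothetical scene: a narrow strip of road ahead and a wide periphery.
regions = {
    "road_ahead": (200.0, 8.0),   # small area, high priority
    "periphery": (1800.0, 1.0),   # large area, low priority
}
shots = allocate_shots(34_000, regions)
for name, (area, _) in regions.items():
    print(f"{name}: {shots[name]} shots, {shots[name] / area:.0f} per sq. degree")
```

With these made-up numbers the road ahead is sampled eight times more densely than the periphery, even though the sensor fires the same total number of shots per frame.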

Therefore, while driving on highways, the system can focus on cars, trucks and oncoming traffic while staying alert to roadside obstacles. In urban areas, the system will spread its focus evenly across the entire field of vision, occasionally shifting it to scan for any obstacles that have been missed.

Will this make autonomous technology more affordable? Luis Dussan says that “if you compare true apples-to-apples, we’re going to be the lowest-cost system around.” AEye’s system will require multiple 70-degree field-of-view sensors to be fitted on a car to cover a 360-degree view, which is bound to raise costs. However, with AI on board, autonomous cars can perceive the world like humans and become more efficient in the real world. Will they be safer? Humans are known to make mistakes on the road, and we can only expect the system to perform the way it is programmed to.
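As a rough guide to what “multiple” sensors means here, the sketch below works out the smallest number of 70-degree units needed for all-round coverage. It assumes the fields of view are laid edge to edge with no overlap, which is an idealisation; a real installation would likely overlap them and could need more. The function name `min_sensors` is hypothetical.

```python
import math


def min_sensors(coverage_deg: float, sensor_fov_deg: float) -> int:
    """Lower bound on the number of sensors needed to cover the given
    angle, assuming their fields of view do not overlap."""
    return math.ceil(coverage_deg / sensor_fov_deg)


print(min_sensors(360, 70))
```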
